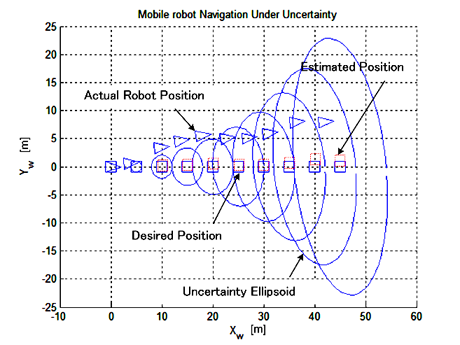

Fig. 1. Uncertainty ellipsoids during the movement of a mobile robot.

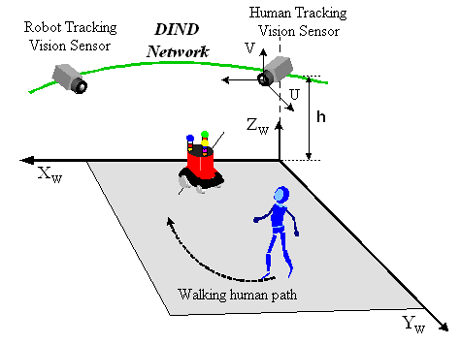

2. Image Projection of a Walking Human

During navigation, a mobile robot may need to re-localize itself. When a walking human is captured by a CCD camera of the DINDs and the human's motion information is available to the mobile robot, the robot can stop at its current position and improve the accuracy of its own position estimate by observing the walking human. The given trajectory of the human can be expressed as a linear equation in the image frame, and, using the robot's current position estimate, geometric constraint equations can be derived through coordinate transformation. The derivation of these constraint equations is illustrated with the example shown in Fig. 2.
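As a minimal sketch of the kind of geometric constraint described above, the Python code below predicts where a walking human should appear in the image, given the robot pose and the human's world position. It rests on illustrative assumptions that are not the paper's formulation: a 1-D pinhole camera mounted at the robot origin with its optical axis along the robot heading, a linear walking model `h(t) = p0 + v*t` in the world frame, and the hypothetical names `human_position`, `project_to_image`, and `focal`.

```python
import math
from typing import Sequence, Tuple

Pose = Tuple[float, float, float]  # hypothetical layout: (x, y, theta), theta in radians


def human_position(p0: Sequence[float], v: Sequence[float], t: float) -> Tuple[float, float]:
    """Assumed linear walking model in the world frame: h(t) = p0 + v * t."""
    return (p0[0] + v[0] * t, p0[1] + v[1] * t)


def project_to_image(pose: Sequence[float], point: Sequence[float], focal: float) -> float:
    """Predicted horizontal image coordinate of a world point.

    Hypothetical 1-D pinhole model: the camera sits at the robot origin
    and looks along the robot heading; `focal` is in pixels.
    """
    x, y, theta = pose
    dx, dy = point[0] - x, point[1] - y
    # Coordinate transformation: world frame -> robot frame (rotation by -theta).
    forward = math.cos(theta) * dx + math.sin(theta) * dy
    lateral = -math.sin(theta) * dx + math.cos(theta) * dy
    if forward <= 0.0:
        raise ValueError("point is behind the camera")
    # Perspective division gives the image coordinate.
    return focal * lateral / forward
```

Each measured image coordinate `u` at time `t` then yields one constraint, `u = project_to_image(pose, human_position(p0, v, t), focal)`, in the three unknowns `(x, y, theta)`; observations at several instants are needed before the pose is fully determined.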

Fig. 2. Coordinates for a walking object and a mobile robot.

3. Position Correction

The position of the walking human in the image frame, calculated from the estimated robot position, deviates from the actually observed value. This error is used to correct the robot position recursively. Because the input information is uncertain, i.e., the human position in the image frame contains noise and the robot position estimate has uncertain components, the Kalman filtering technique is adopted to form a robust observer. The geometric constraint equations between the human image coordinates and the robot position are approximated by a linear system equation, and the Kalman filter is applied to estimate the robot position.
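The correction step can be sketched as an extended-Kalman-filter measurement update, under the assumption of a hypothetical 1-D pinhole camera at the robot origin looking along the heading. The constraint is linearized around the current estimate (here with a central-difference Jacobian, an illustrative choice rather than the paper's analytic derivation), and the difference between the measured and predicted image coordinate drives the pose correction. The names `project_to_image`, `jacobian`, `kalman_update`, and `meas_var`, and the `(x, y, theta)` state layout, are assumptions for illustration only.

```python
import math
from typing import List, Sequence, Tuple

Pose = Tuple[float, float, float]  # hypothetical state: (x, y, theta)
Matrix = List[List[float]]         # 3x3 covariance as nested lists


def project_to_image(pose: Sequence[float], point: Sequence[float], focal: float) -> float:
    """Hypothetical 1-D pinhole model (camera at robot origin, axis along heading)."""
    x, y, theta = pose
    dx, dy = point[0] - x, point[1] - y
    forward = math.cos(theta) * dx + math.sin(theta) * dy
    lateral = -math.sin(theta) * dx + math.cos(theta) * dy
    return focal * lateral / forward


def jacobian(pose: Sequence[float], point: Sequence[float], focal: float,
             eps: float = 1e-6) -> List[float]:
    """1x3 Jacobian H of the projection w.r.t. (x, y, theta), by central differences."""
    row = []
    for i in range(3):
        plus, minus = list(pose), list(pose)
        plus[i] += eps
        minus[i] -= eps
        row.append((project_to_image(plus, point, focal)
                    - project_to_image(minus, point, focal)) / (2.0 * eps))
    return row


def kalman_update(pose: Pose, cov: Matrix, point: Sequence[float], u_meas: float,
                  focal: float, meas_var: float) -> Tuple[Pose, Matrix]:
    """One EKF measurement update of the robot pose from a scalar image coordinate."""
    h = jacobian(pose, point, focal)                                   # H (1x3)
    innovation = u_meas - project_to_image(pose, point, focal)         # measured - predicted
    ph = [sum(cov[i][j] * h[j] for j in range(3)) for i in range(3)]   # P H^T (3x1)
    s = sum(h[i] * ph[i] for i in range(3)) + meas_var                 # S = H P H^T + R
    gain = [v / s for v in ph]                                         # K = P H^T / S
    new_pose = (pose[0] + gain[0] * innovation,
                pose[1] + gain[1] * innovation,
                pose[2] + gain[2] * innovation)
    # P <- P - K (H P); H P equals (P H^T)^T because P is symmetric.
    new_cov = [[cov[i][j] - gain[i] * ph[j] for j in range(3)] for i in range(3)]
    return new_pose, new_cov
```

For instance, with an estimated pose `(0.0, 0.0, 0.0)`, covariance `diag(0.01, 0.01, 0.001)`, the human at `(2.0, 1.0)`, `focal = 500.0`, `meas_var = 4.0`, and a synthetic measurement `u = 200.0` (consistent with a true pose of `(0.0, 0.2, 0.0)`), the update moves the estimated `y` toward the true value and shrinks the covariance. A single scalar observation constrains only one direction of the pose, so the update would be repeated as the human walks along the trajectory.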